ASL Recognition

AI/ML · Computer Vision

Object detection pipeline for ASL hand sign localization and classification, benchmarked across SSD/YOLO/Faster R-CNN.

- AI/ML

- Computer Vision

- Python

- YOLOv5

- Detectron2

- TensorFlow OD

- Roboflow

Summary

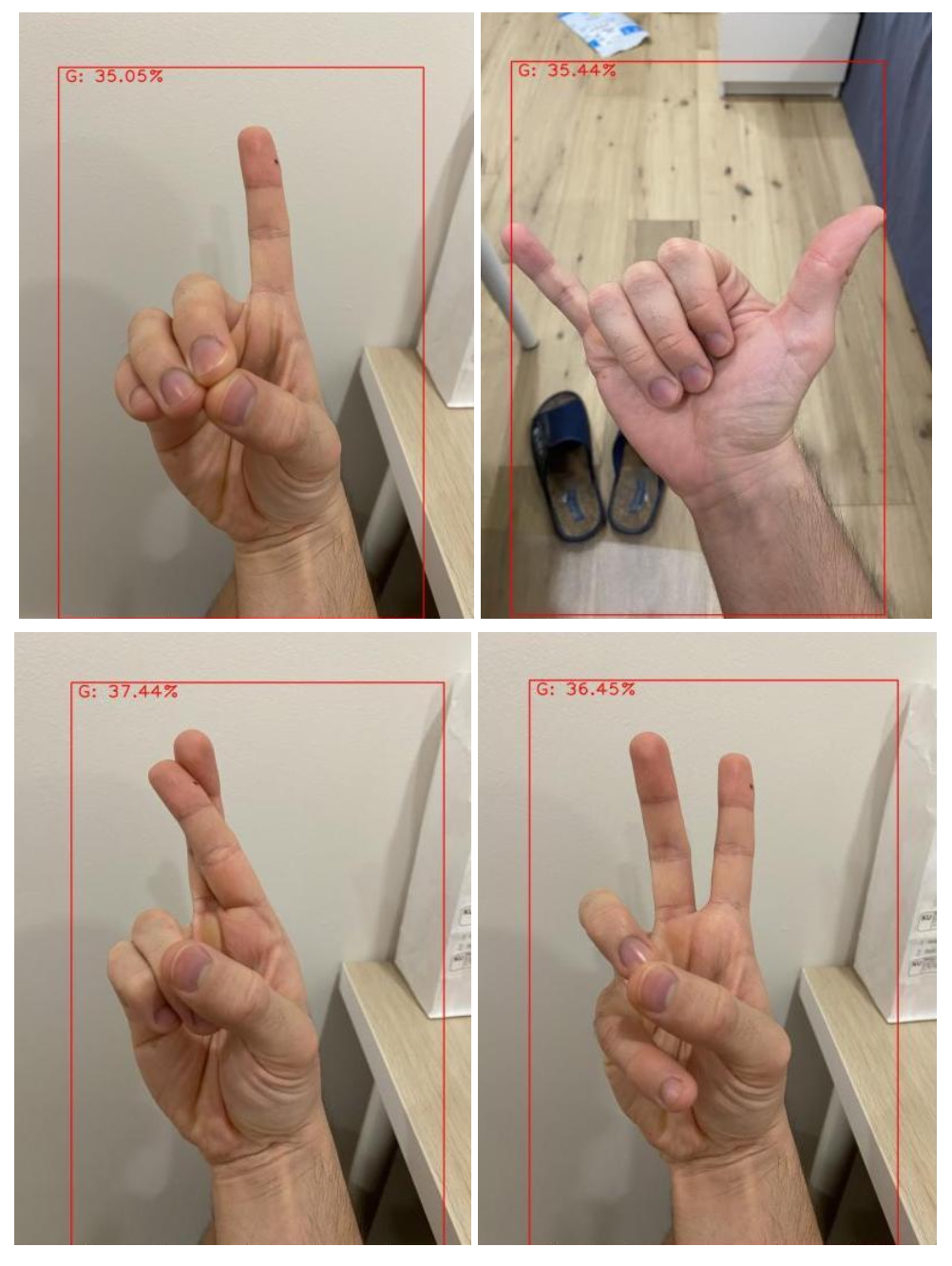

American Sign Language alphabet recognition from images. The goal was to localize the hand and classify the sign into one of 26 letters. We evaluated multiple object-detection approaches (SSD, YOLOv5, Faster R-CNN), used transfer learning, and built a simple Django API for inference demos. Key highlights:

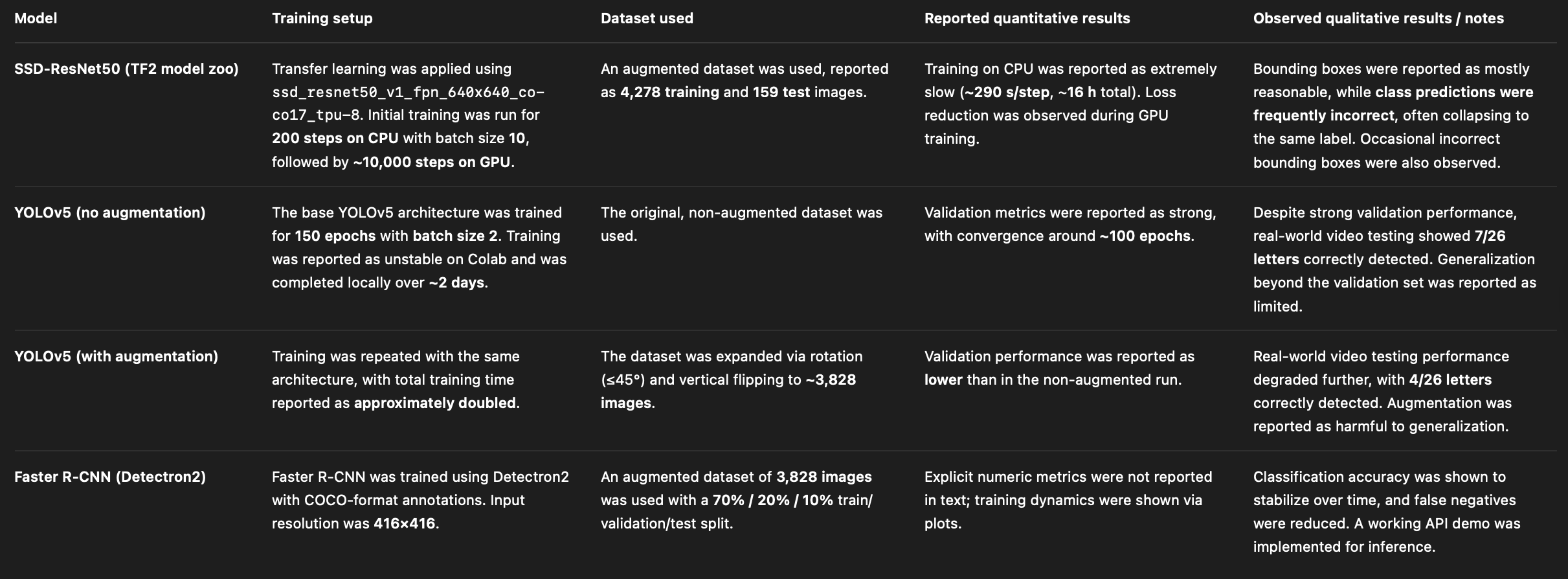

- Compared SSD-ResNet50, YOLOv5, and Faster R-CNN pipelines on the same labeled dataset

- Used Roboflow augmentation to scale the dataset and standardize labels

- Shipped a prediction endpoint returning letter, bounding box, and confidence

Quick facts

- Role: ML Engineer (Computer Vision) + Backend Developer (demo API)

- Timeframe: Not specified

- Platform: Model training + REST API demo

- Status: Completed (academic project)

- Team: Team project

Problem

- Needed both localization (bounding box) and classification (26 classes) with limited labeled data.

- Training compute was constrained early (no GPU), forcing careful iteration choices.

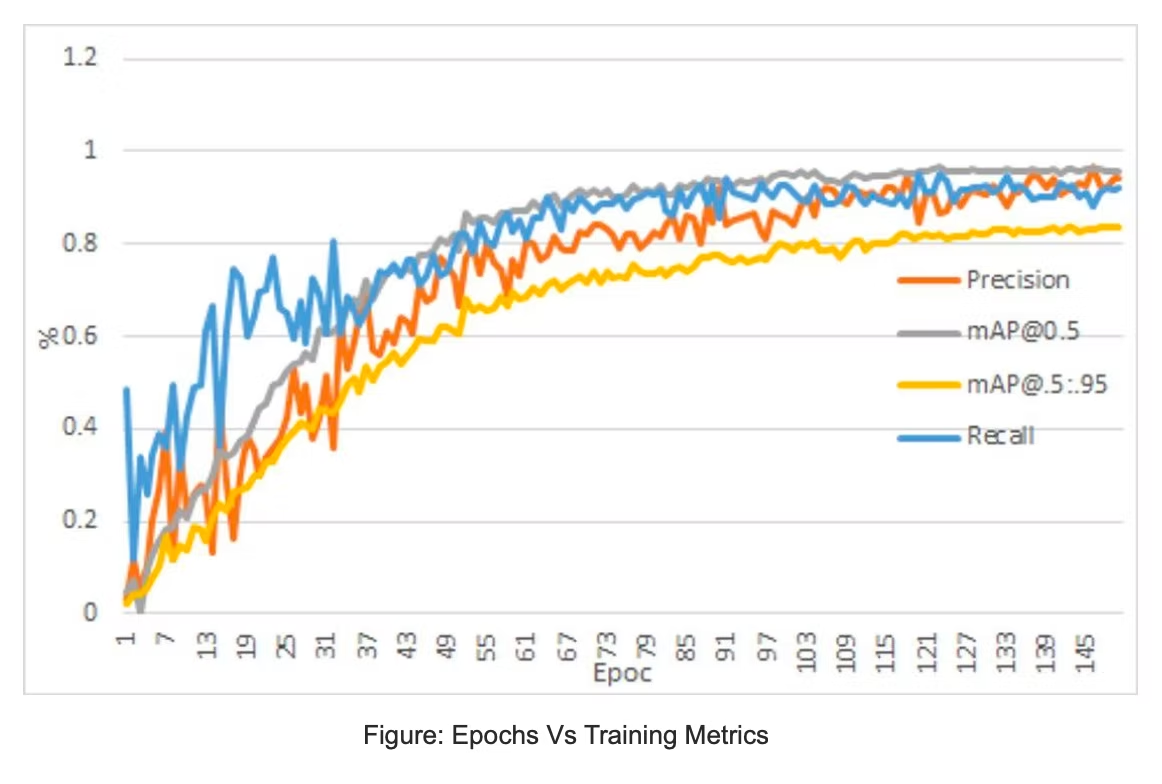

- Offline validation metrics did not translate to real-world video performance.

Solution

We framed the task as object detection and used transfer learning to accelerate progress. Data was augmented via Roboflow (rotations and flips) to increase per-class coverage. We then benchmarked SSD-ResNet50 vs YOLOv5 vs Faster R-CNN, focusing on the gap between validation metrics and live inference. For the demo, we wrapped inference behind a Django REST endpoint that accepts an image and returns the predicted letter, bounding box, and score.

- Prioritized a reproducible pipeline over a single “best” model

Architecture

- Dataset: Kaggle ASL alphabet images (26 classes) with existing annotations

- Preprocessing: Roboflow augmentation + train/val/test split + COCO/YOLO export formats

- Models: transfer learning on SSD-ResNet50 (TF Object Detection Zoo), YOLOv5, Faster R-CNN (Detectron2)

- Training: GPU-enabled runs for longer training schedules; tracked loss and detection metrics

- Evaluation: compared validation metrics vs real-world tests (video inference)

- Demo: Django API endpoint → model inference → JSON response (class, box, score)

Tech stack

- Architecture: Transfer learning, object detection (SSD / YOLOv5 / Faster R-CNN)

- Backend/Infra: Django, REST API, Detectron2, TensorFlow Object Detection Zoo

- Tooling: PyTorch, Roboflow, Kaggle dataset, Google Colab/local GPU training

Hard problems solved

- Managed compute constraints: early SSD training was too slow without GPU, forcing a model strategy change

- Diagnosed metric vs reality mismatch: high validation scores but poor live video accuracy

- Built a consistent labeling pipeline across frameworks (TF OD API, YOLO format, COCO JSON)

- Tuned augmentation to increase data volume while monitoring degradation in generalization

- Investigated failure modes separately for box regression vs class prediction (correct box, wrong class)

- Packaged inference into an API that returns structured outputs suitable for a front-end demo

Impact / Results

- Produced an end-to-end ASL alphabet detection pipeline with multiple model baselines

- Demonstrated that offline metrics can be misleading without real inference testing

- Delivered a working inference API for demo usage (image in → prediction out)